It is still challenging to reliably give a general indication of unit status, especially for units in different network models. To this end, we propose a novel method for quantitatively clarifying the status of a single unit in a convolutional neural network (CNN) using algebraic topological tools. Unit status is indicated by calculating a topology-based entropy, called feature entropy, which measures the degree of chaos of the global spatial pattern hidden in the unit for a category. In this way, feature entropy can provide an accurate indication of status for units in different networks under diverse situations such as weight-rescaling operations. Further, we show that feature entropy decreases as the layer goes deeper and follows almost the same trend as the loss during training. We also show that investigating the feature entropy of units on training data alone can discriminate between networks with different generalization ability, from the view of the effectiveness of feature representations.

Since Abid and Abdullrazak (2017) suggested the unit truncated H-G family of distributions, many published papers have built on this family.

Entropy is a thermodynamic function that we use to measure the uncertainty or disorder of a system. According to the Boltzmann equation, entropy is a measure of the number of microstates available to a system. Accordingly, the entropy of a solid (whose particles are closely packed) is lower than that of a gas (whose particles are free to move). These high school chemistry worksheets are full of pictures, diagrams, and deeper questions covering Gibbs free energy and entropy.

The choice of logarithmic base in the entropy formulae determines the unit of information entropy that is used. A common unit of information is the bit, based on the binary logarithm. Other units include the nat, which is based on the natural logarithm, and the decimal digit, which is based on the common logarithm.

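
The link between logarithm base and unit of information entropy can be illustrated with a short sketch. The function name `shannon_entropy` and the fair-coin distribution are assumptions chosen for illustration; they do not come from the text above.

```python
import math

def shannon_entropy(probs, base=2.0):
    """Entropy of a discrete distribution; the log base sets the unit."""
    if not math.isclose(sum(probs), 1.0, rel_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    # Terms with p == 0 contribute nothing, so they are skipped.
    return -sum(p * math.log(p, base) for p in probs if p > 0)

fair_coin = [0.5, 0.5]  # illustrative distribution, not from the source

bits = shannon_entropy(fair_coin, base=2)         # binary log: bits
nats = shannon_entropy(fair_coin, base=math.e)    # natural log: nats
digits = shannon_entropy(fair_coin, base=10)      # common log: decimal digits

print(f"{bits:.4f} bit, {nats:.4f} nat, {digits:.4f} decimal digit")
```

The three results describe the same uncertainty; they differ only by a constant factor, since changing the base of a logarithm multiplies it by a constant (for example, the value in nats equals the value in bits times ln 2).
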
We can get the units for entropy from the Boltzmann constant, $k$, which is equal to $1.38 \times 10^{-23}$ joules per kelvin. EU stands for Entropy Unit (and also for European Union, among other meanings). Since we started with zero entropy at zero kelvin, and the entropy increases with temperature, the entropy at every temperature above zero kelvin must be greater than zero; in other words, the entropy is positive.
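
As a rough sketch of how the units follow from the Boltzmann constant, the snippet below evaluates the Boltzmann relation $S = k \ln W$, where $W$ is the number of microstates. The function name `boltzmann_entropy` and the example microstate counts are assumptions for illustration; the constant uses the exact SI value, which rounds to the figure quoted above.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)

def boltzmann_entropy(microstates):
    """Return S = k * ln(W) in joules per kelvin."""
    if microstates < 1:
        raise ValueError("a system has at least one microstate")
    # ln(W) is dimensionless, so S inherits its units (J/K) from k.
    return K_B * math.log(microstates)

# One accessible microstate gives zero entropy; more microstates
# give a positive entropy.
for w in (1, 2, 10**6):  # hypothetical microstate counts
    print(w, boltzmann_entropy(w))
```

With a single microstate the logarithm vanishes and the entropy is zero; any larger count gives a positive value, which matches the argument that entropy is positive above zero kelvin.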

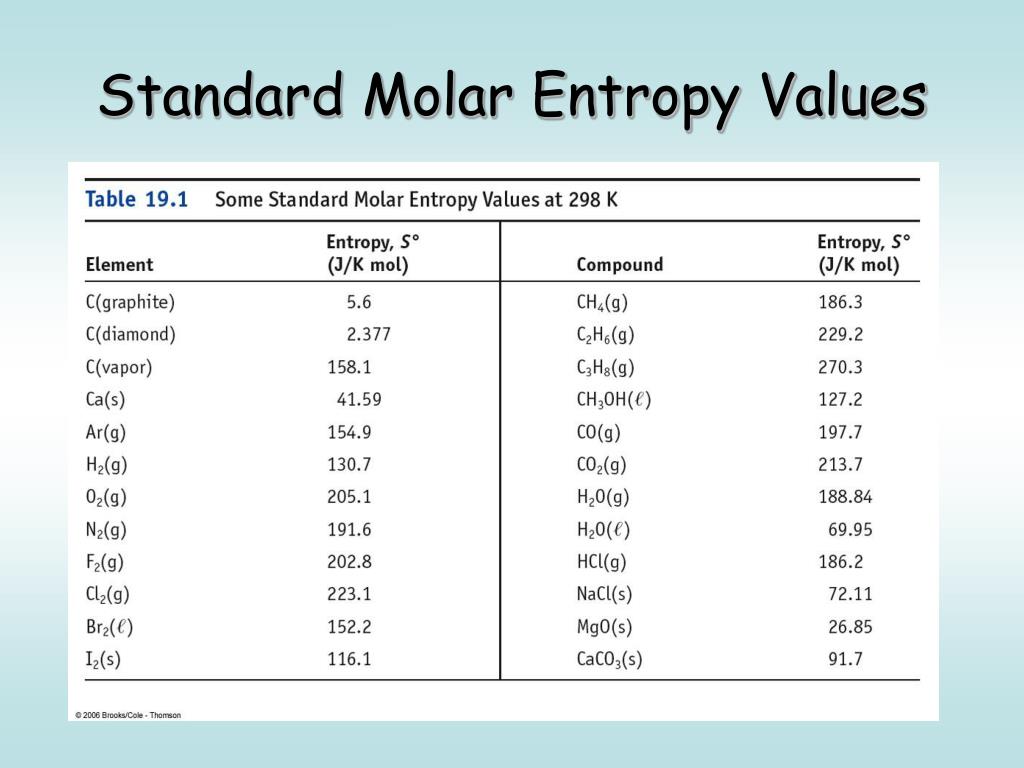

Abstract: Identifying the status of individual network units is critical for understanding the mechanism of convolutional neural networks (CNNs).

The abbreviation EU is mostly used in the medical and healthcare categories.

The standard molar entropy at standard pressure is usually given the symbol $S^\circ$ and has units of joules per mole per kelvin ($\mathrm{J\,mol^{-1}\,K^{-1}}$). Unlike standard enthalpies of formation, the value of $S^\circ$ is absolute. That is, an element in its standard state has a definite, nonzero value of $S^\circ$ at room temperature. When heat is added, the entropy increases; when heat is removed, the entropy decreases. You have to remember that the entropy change is calculated in energy units of joules, but $\Delta G$ and $\Delta H$ are both measured in kJ.

If $T$ is the kinetic energy, then $C$ relates to potential energy. If, for the same quantity of heat, one sample reaches a higher temperature than another, it means that more energy is stored in the first system than in the second. Although heat capacity is found through known relationships, that does not mean the quantities are based on each other. Does the velocity depend on position? No! The fact that a conservation law allows us to relate two (measurable) quantities does not mean that those two affect each other. Interesting, right? In $(1)$, the whole $T$ multiplies the infinitesimal fraction. I hope I make sense.
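
The unit mismatch between entropy (joules) and the free energy and enthalpy terms (kilojoules) is easy to get wrong, so here is a minimal sketch of the conversion. It assumes the standard relation $\Delta G = \Delta H - T\Delta S$, which the text above does not state explicitly; the function name and the numerical inputs are hypothetical and are not measured data.

```python
def gibbs_free_energy_kj(delta_h_kj, temperature_k, delta_s_j_per_k):
    """Return delta G in kJ/mol from delta H (kJ/mol) and delta S (J/(mol K))."""
    # Convert the entropy change from J to kJ before combining terms.
    delta_s_kj_per_k = delta_s_j_per_k / 1000.0
    return delta_h_kj - temperature_k * delta_s_kj_per_k

# Hypothetical inputs chosen only to illustrate the unit handling.
delta_g = gibbs_free_energy_kj(
    delta_h_kj=-100.0,        # kJ/mol
    temperature_k=298.0,      # K
    delta_s_j_per_k=-200.0,   # J/(mol K)
)
print(f"delta G is about {delta_g:.1f} kJ/mol")
```

Skipping the division by 1000 would make the $T\Delta S$ term a thousand times too large and would swamp the enthalpy term, which is the mistake the reminder above is warning about.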
